DMD – Driver Monitoring Dataset

A multi-modal dataset for different driver monitoring scenarios

Today’s driving accidents are mainly due to human error. To make roads safer, we present DMD, a multi-modal dataset that can contribute to the development of Driver Monitoring Systems. This way, we can rely on technology to put a brake on these undesirable statistics.

23 times: the increase in accident risk for distracted driving (WHO).

ABOUT

Driver Monitoring Dataset

The DMD was devised to address different scenarios in which driver monitoring is essential in the context of automated vehicles at SAE Levels 2-3.

FEATURES

Activities Included

DMD annotations are in VCD (Video Content Description) format, which supports the description of scenes with spatio-temporal object annotations, and of semantics with actions, events and the relations between them.

Here are a few of the annotations available in the DMD.
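
To give a feel for what a temporal action annotation is, the following is a minimal Python sketch that models each annotation as an action label active over a range of video frames and looks up which actions are active at a given frame. It is an illustration only: it does not follow the VCD schema or use any VCD, TaTo or DEx API, and the class, function, labels and frame numbers are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ActionInterval:
    """Hypothetical temporal annotation: a label active over a closed frame range."""
    label: str
    frame_start: int
    frame_end: int  # inclusive

    def contains(self, frame: int) -> bool:
        return self.frame_start <= frame <= self.frame_end


def actions_at(annotations: List[ActionInterval], frame: int) -> List[str]:
    """Return the sorted labels of all actions active at the given frame."""
    return sorted(a.label for a in annotations if a.contains(frame))


# Made-up labels and frame numbers, not taken from the DMD.
example_annotations = [
    ActionInterval("safe_drive", 0, 120),
    ActionInterval("texting", 121, 300),
    ActionInterval("looking_road", 0, 150),
]

print(actions_at(example_annotations, 100))
print(actions_at(example_annotations, 130))
```

Overlapping intervals are allowed on purpose, since several actions (for example a hand action and a gaze action) can be active in the same frame.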

FEATURES

Channels & Scenarios

We recognize the need to include an infrared channel in the dataset for circumstances in which lighting is poor. Our dataset also contains depth information of the scene, opening possibilities for new analysis approaches. We bring you the data you need in 3 different forms.

CAR SIMULATOR

SETUP

Recording Set-Ups

For the recordings, three Intel® RealSense™ D400-Series depth cameras were installed in the car and in the simulator, positioned to capture images of the driver’s face, body and hands, as shown in the figure.

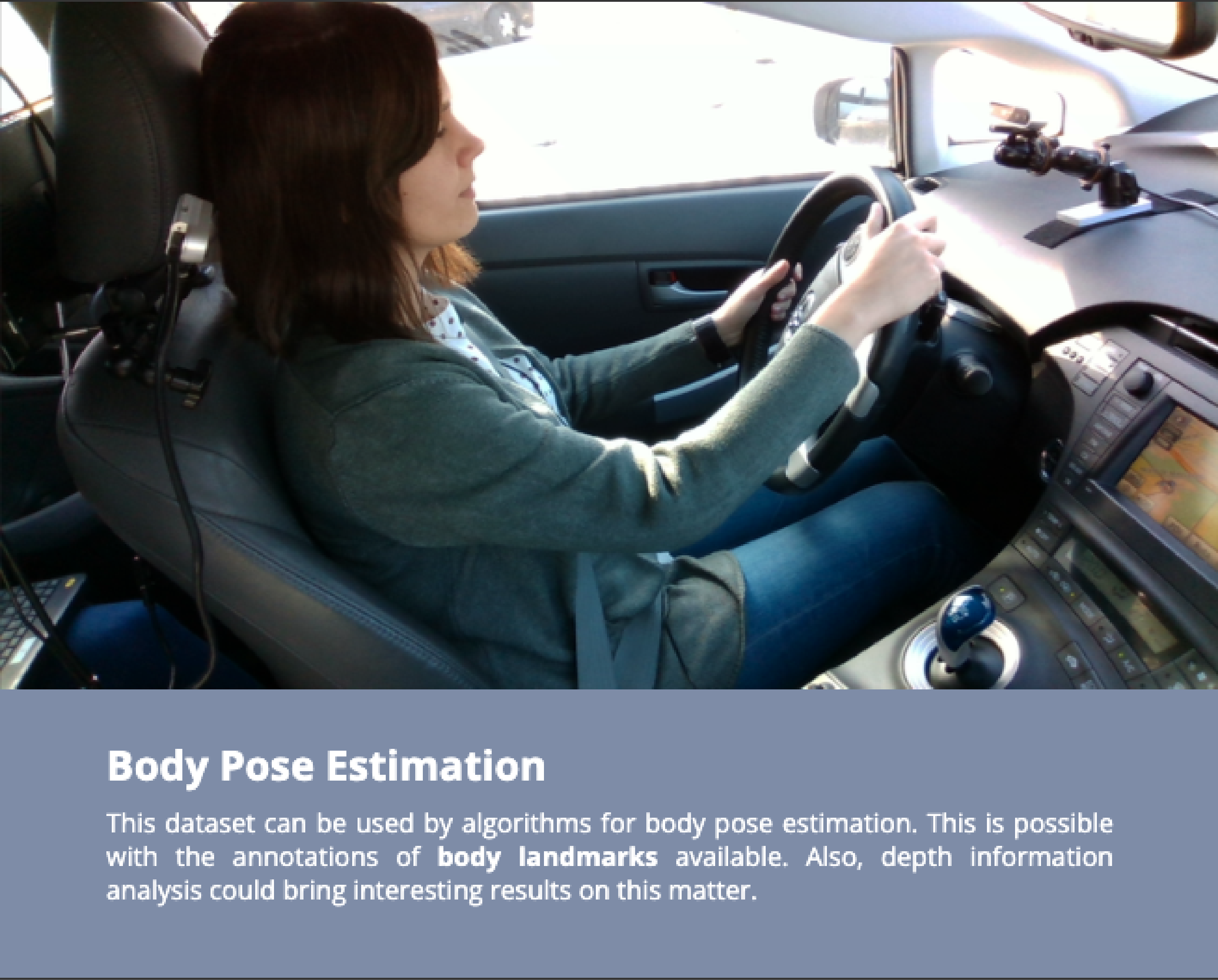

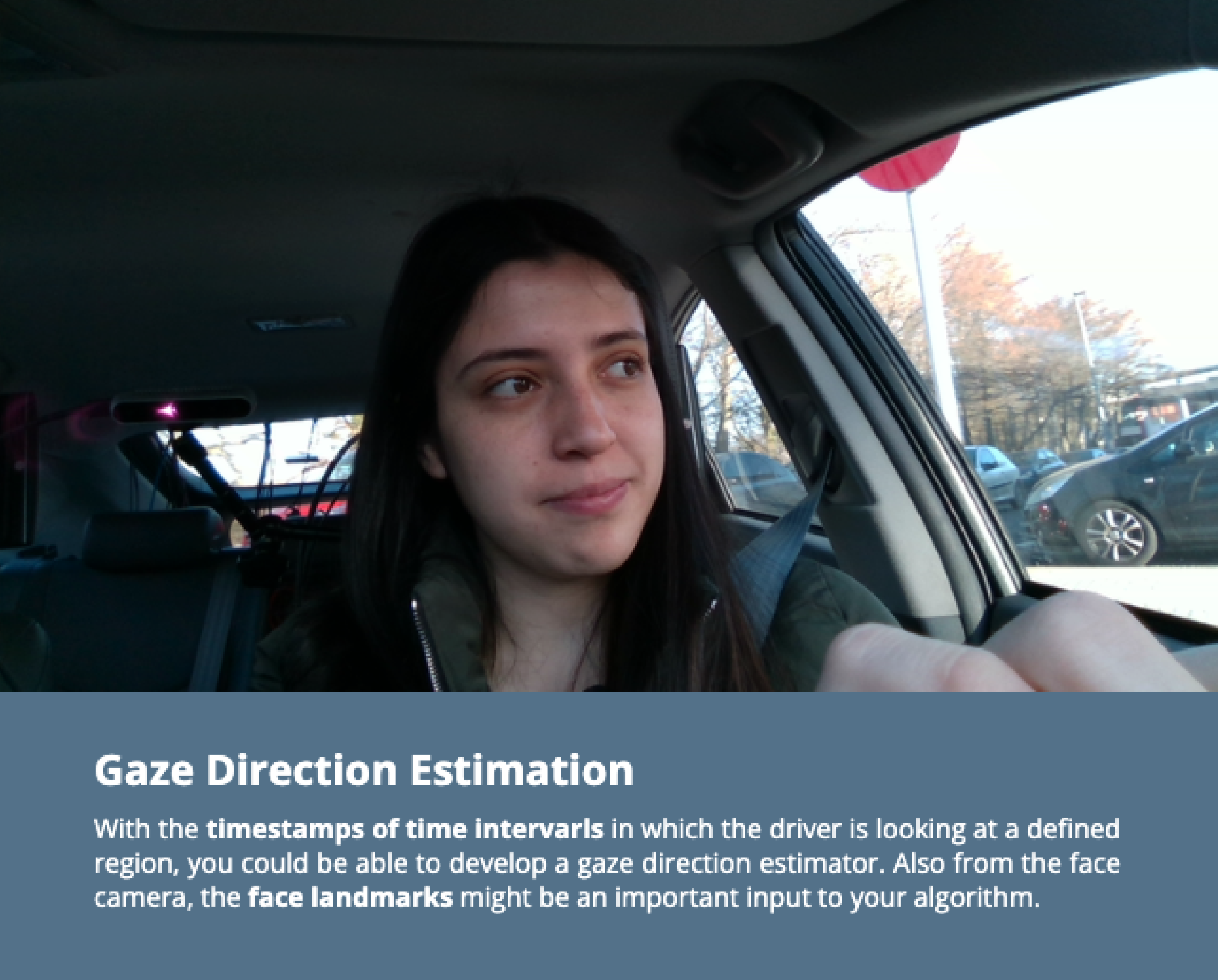

APPLICATIONS

Potential Uses of DMD

The DMD Annotations allow using the material for different research purposes and fields.

TOOLS

TaTo and DEx

Our team has developed a couple of open-source tools dedicated to DMD annotation (TaTo) and exploration (DEx). With TaTo, you can edit, adapt or create new temporal annotations of the DMD. And if you want to access the annotations and prepare material for training ML algorithms, DEx will do it for you.

«We believe this collaboration will play a crucial role in making our roads safer for everyone around the world.»

Annotations are being made in collaboration with Deepen AI, a data labeling company specializing in computer vision solutions.

REFERENCES

Cite us!

We have presented DMD in this paper. It would be awesome if you referenced this dataset in your work.

The DMD Team thanks you.

Other publications regarding the DMD:

- Cañas, P. N., Artiles, A. H., Nieto, M., Rodríguez, I. (2026). Exploring visual language models for driver gaze estimation: A task-based approach to debugging AI. Computer Vision and Image Understanding, 263, 104593. doi:10.1016/j.cviu.2025.104593

- Cañas P.N., García M., Aranjuelo N., Nieto M., Iglesias A. and Rodríguez I. (2023). Dynamic Risk Assessment Methodology with an LDM-Based System for Parking Scenarios. IEEE 26th International Conference on Intelligent Transportation Systems (ITSC), Bilbao, Spain, 2023, pp. 5034-5039. doi: 10.1109/ITSC57777.2023.10422385

- Urselmann, T., Cañas, P.N., Ortega, J.D., & Nieto, M. (2022). Semi-automatic Pipeline for Large-Scale Dataset Annotation Task: A DMD Application. ECCV Workshops.

- Ortega J., Cañas P., Nieto M., Otaegui O. and Salgado L. (2022). Challenges of Large-Scale Multi-Camera Datasets for Driver Monitoring Systems. Sensors 22, no. 7: 2554. https://doi.org/10.3390/s22072554

- Cañas P., Ortega J., Nieto M. and Otaegui O. (2021). Detection of Distraction-related Actions on DMD: An Image and a Video-based Approach Comparison. In Proceedings of the 16th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications – Volume 4: VISAPP, ISBN 978-989-758-488-6, pages 458-465. DOI: 10.5220/0010244504580465

DOWNLOAD

I Need the DMD!

Download Complete Dataset

You must be 18 or older to download. This dataset can only be used for academic purposes. Any other use is prohibited. To ensure the continuous development of this project, we ask for responsible use of the data you are about to acquire.

This dataset is published under the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 License.

If you have any questions, contact us at info-dmd@vicomtech.org.

«IMPORTANT: Due to a licence revision, material from specific participants has been removed and will no longer be public. In addition, serving and maintaining a dataset of this size required significant effort and affected its accessibility. For this reason, we decided to reduce the content. Recordings made in the simulator will not be public, and IR and depth material will also be removed to make the dataset easier to download. Only RGB material will be available.»

Legal

All texts, pictures and other works published on the website are, unless stated otherwise, subject to the copyright of Vicomtech. Any duplication, distribution, storage, transmission, reproduction or transfer of the contents is expressly prohibited without the written permission of Vicomtech.

Vicomtech assumes no responsibility for the correctness and completeness of the information on these pages and dataset.

Licence

All datasets on this page are copyrighted by Vicomtech and published under the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 License. This means that you must attribute the work in the manner specified by the authors, you may not use this work for commercial purposes, and if you remix, transform, or build upon the material, you may not distribute the modified material.

Privacy

Found yourself in the dataset material? If you want more information on how your data is being stored and/or processed, or you want it to be removed from the dataset, please write to the contact email. (Articles 15 and 17 GDPR)

The VI-DAS Project has received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement No 690772.

The DMD has been improved during the Aware2All Project. This project has received funding from the European Union’s Horizon Europe research and innovation programme under grant agreement number 101076868.

The DMD was created within the framework of the VI-DAS Project led by Vicomtech. Intel® was part of the consortium and is also a contributor to the DMD.